The 2026 Generative AI Guide: Balancing Cloud Power and Confidentiality

90% of companies expose their data to public AI. A panorama of cloud solutions, aggregators, local-first approaches, and Elosia’s hybrid model.

1. The 2026 AI Paradox

The Reality: Growing Dependence, Immense Risks

In 2026, artificial intelligence is no longer a trend; it has become an indispensable workplace tool for organizations. According to a Celiveo study, 90% of companies expose their data daily to public AI models via employees’ personal accounts, often without their knowledge. Meanwhile, data breaches linked to LLMs have followed a worrying trajectory: AI-related security incidents surged by 50% in just one year, per DeepDive, while the global average cost of a data breach reached $4.88 million in 2024, up 10% from 2023.

This figure is just the beginning. Generative AI creates a new class of threats: Shadow AI, the unsanctioned use of public AI tools by employees. Your data fuels the training of future models. Your strategic prompts accumulate on servers outside the EU. Your confidential conversations risk prompt injection or unauthorized reuse. We have never had access to such powerful tools, and we have never faced so many risks.

Can We Really Trust the Cloud Giants?

The question posed by thousands of DPOs (Data Protection Officers) and IT leaders in 2026 is existential: Can we use GPT-5, Claude 4.5, or Gemini 3 Pro without compromising our strategic data?

The answer, according to regulatory authorities, is nuanced. OpenAI has indeed improved its GDPR compliance since 2023 by offering DPAs (Data Processing Agreements) and Enterprise plans. However, over 60% of data sent to ChatGPT contains personally identifiable information, and only 20% of users are aware of privacy settings. Worse still, free and non-Enterprise paid versions remain non-compliant with GDPR, with unlimited data retention and insufficient transparency.

Meanwhile, the EU AI Act mandates a “Fundamental Rights Impact Assessment” starting in 2026 for all high-risk AI systems, with fines of up to 7% of annual global turnover. The message is clear: responsibility lies with the company, not the cloud provider.

What This Article Covers

- Understanding the real risks posed by cloud giants and Shadow AI

- Exploring hybrid tools that attempt to reconcile power and security

- Discovering the local-first approach with Ollama and AnythingLLM

- Evaluating Elosia.ai, the platform promising a “third way” between total security and multi-model power

- Choosing the right AI stack for your risks and regulatory context

2. The Cloud Giants: OpenAI, Anthropic, Google – Raw Power and Strategic Risks

Next-Generation Models and Integrated Ecosystems

As of January 2026, the world’s three leading AI providers offer impressive capabilities:

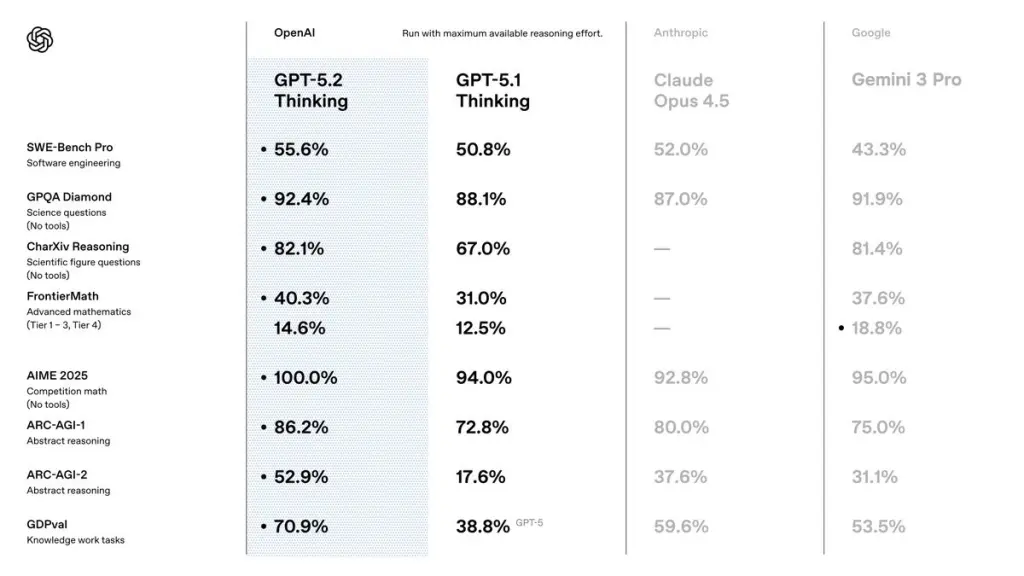

GPT-5.2 (OpenAI): A new unified model with adaptive reasoning. Exceptional performance in mathematics (100% on AIME 2025 without tools), 45% reduction in hallucinations with web search enabled, and improved speed thanks to intelligent routing. Context window of 400,000 tokens.

Claude Opus 4.5 (Anthropic): The undisputed leader in coding (80.9% on SWE-bench Verified). Context window of 200,000 tokens, offset by persistent memory between sessions. Particularly strong in complex multi-step tasks and “Computer Use,” the ability to interact directly with a computer.

Gemini 3 Pro (Google): A natively multimodal model with a massive context window (1 million tokens ≈ 750,000 words). Seamless integration with Google Workspace. Deep Think mode for advanced reasoning. Elo score of 1501, the first to exceed 1500.

While these models offer unparalleled power, they raise a critical question: What about confidentiality?

The Core Problem: Shadow AI

Three risks converge in 2026.

1. Continuous Training and Data Retrieval

By default (unless using an Enterprise agreement), OpenAI, Anthropic, and Google use your data to improve their future models. This practice poses two major risks:

- Intellectual property theft: A confidential business strategy, a client audit report, proprietary code: anything copy-pasted into a public LLM potentially becomes training data.

- Unintentional exposure: Suppose an employee writes, “Our client X has a $10M budget in 2026.” That sentence joins the training datasets, and another LLM could later reproduce the information.

2. Data Storage Outside the EU and Persistent GDPR Issues

Despite improvements, OpenAI, Anthropic, and Google’s servers are primarily located in the United States. Under GDPR, transferring data outside the EU requires a robust legal basis. However, the Schrems II ruling (July 2020) invalidated the Privacy Shield and complicated SCCs (Standard Contractual Clauses).

The consequence in 2026: 63% of the data sent to ChatGPT contains personally identifiable information, so these transfer rules apply to most everyday use. And under EU law, you, not the provider, remain responsible for compliance.
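One common mitigation is to mask obvious identifiers before a prompt leaves the company network. The sketch below is only an illustration under stated assumptions: the two regular expressions and the `redact_pii` helper are hypothetical, cover e-mail addresses and phone-like numbers only, and would miss names, addresses, and business secrets.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact_pii(prompt: str) -> str:
    """Mask e-mail addresses and phone-like numbers before a prompt is sent out."""
    prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    prompt = PHONE_RE.sub("[PHONE]", prompt)
    return prompt

print(redact_pii("Contact jane.doe@example.com or +33 1 23 45 67 89 about the audit."))
```

Note that a sentence such as “Our client X has a $10M budget in 2026” passes through unchanged: pattern-based masking reduces personal-data exposure, but it does not protect strategic information.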

3. Prompt Injection and Server Log Leaks

A new class of cyberattacks is emerging: prompt injection. An attacker embeds hidden instructions in data the model must process, forcing the LLM to disclose sensitive information from previous context. The longer your prompt history accumulates, the greater the risk.

Summary Table: Strengths vs. Risks

| Criteria | GPT-5.2 | Claude Opus 4.5 | Gemini 3 Pro |
|---|---|---|---|
| Mathematical power | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Code performance | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Multimodal | ⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Context window | ⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| GDPR compliance | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ |
| Default confidentiality | ⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐ |

Conclusion: These models are exceptional. But without additional controls or strict contractual negotiations, they are not suitable for strategic or GDPR-regulated data.

3. The Multi-Model Approach: Aggregators Like WritingMate and Mammoth.ai

The Value: Avoiding Vendor Lock-In

The 2026 trend is moving toward multi-model aggregators. WritingMate and Mammoth.ai promise to centralize GPT-5, Claude, Gemini, Mistral, and others in a single interface.

Advantages:

- Flexibility: Need persuasive text? Claude. Image generation? Gemini. Complex math? GPT-5.2. Access the best without multiplying subscriptions.

- Cost reduction: A single Mammoth.ai subscription (€10–60/month) replaces three or four individual plans.

- Workflow continuity: Centralized history, organized projects, reduced friction.

- Protection against model changes: If an LLM degrades, switching to another takes just a few clicks.
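At the API level, the same "switch when a model degrades" idea is usually expressed as an ordered fallback. The following is a minimal sketch, not how WritingMate or Mammoth.ai work internally: a model is represented by a plain function, and `ask_with_fallback`, `degraded_model`, and `backup_model` are hypothetical names used for illustration.

```python
from typing import Callable, Sequence

# A "model" here is just a function from prompt to answer (hypothetical stand-in).
Model = Callable[[str], str]

def ask_with_fallback(prompt: str, models: Sequence[tuple[str, Model]]) -> tuple[str, str]:
    """Try each model in order; return (model_name, answer) from the first success."""
    errors = []
    for name, call in models:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))

def degraded_model(prompt: str) -> str:
    raise TimeoutError("model unavailable")

def backup_model(prompt: str) -> str:
    return f"backup answer to: {prompt}"

print(ask_with_fallback("Draft a product tagline", [("primary", degraded_model),
                                                    ("backup", backup_model)]))
```

The order of the list encodes the preference, so changing the default model is a one-line edit rather than a migration.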

Quick Comparison: WritingMate vs. Mammoth.ai

WritingMate: Premium positioning, strong in writing. Powerful Chrome extension, direct integration with Gmail and Docs. Standard cloud storage with some guarantees, but no native Zero Data Retention (ZDR). Excellent for marketing and writing teams. Pricing: ~€20–60/month.

Mammoth.ai: A more ambitious approach, offering a full-featured centralized platform. Access to 20+ models, document analysis, image generation, voice/dictation, and integrated web search. Interface can be complex for beginners but rich for power users. Pricing: €10 (basic) to €60/month (premium). Excellent value for versatility.

The Limitation: These Tools Are Still Just Aggregators

WritingMate and Mammoth.ai do not solve the confidentiality problem.

These platforms make LLM access easier, but your data still passes through the cloud giants’ servers (OpenAI, Anthropic, Google). Mammoth.ai adds a layer of abstraction, not a confidentiality barrier. Even when a ZDR mode is available, data remains stored on cloud servers and is thus vulnerable to attacks.

The Zero Data Retention (ZDR) question is not central to their architecture. ZDR means your data is processed in memory and immediately destroyed: never stored, never used for training.

4. Local-First: Ollama and AnythingLLM – Absolute Security at a High Technical Cost

Ollama: The Standard for Local Models

Ollama became the go-to tool in 2025–2026 for running LLMs on your personal machine.

- Download an open-source model (Llama 3, Mistral, Code Llama).

- Run it locally on your computer (CPU or GPU).

- No data ever leaves your machine.
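In practice, applications talk to Ollama through its local HTTP API. The sketch below assumes a default installation listening on `localhost:11434` and a model already pulled under the name `llama3`; the helper names `build_request` and `ask_local` are illustrative, not part of Ollama.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request aimed at the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask_local(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to localhost and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]

# Usage (requires a running Ollama server and a pulled model):
#   print(ask_local("Summarize the GDPR in one sentence."))
```

Because the only network destination is `localhost`, the prompt and the answer stay on the machine.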

Strengths

- Full privacy: Your data never leaves your computer.

- Marginal cost after setup: No monthly subscription.

- Full ownership: Transparency of open-source models.

Weaknesses

- High hardware requirements: Llama 3 70B requires 64+ GB RAM and ideally a powerful GPU (RTX 4090, H100).

- Latency: 2–5 seconds per simple prompt instead of milliseconds.

- Lower performance: Estimated 25–35% gap vs. Claude Opus 4.5 or GPT-5.2.

- Ongoing maintenance: GPU drivers, updates, troubleshooting.
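The hardware requirement follows from simple arithmetic: the memory needed for the weights is roughly the parameter count times the bytes per weight. The helper below is a back-of-the-envelope sketch; `weights_gb` is a hypothetical name, and the figures exclude the context cache and runtime overhead, which add to the total.

```python
def weights_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9

# A 70B-parameter model at common precisions.
for bits in (16, 8, 4):
    print(f"70B at {bits}-bit: ~{weights_gb(70, bits):.0f} GB")
```

At 16-bit precision the weights alone come to about 140 GB, which is why a 70B model is typically run quantized: at 4 bits it needs about 35 GB, which fits within the 64 GB mentioned above with room left for the rest of the system.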

AnythingLLM: The Perfect Interface for Local RAG

AnythingLLM transforms Ollama from a technical tool into a full-fledged work platform. Use case: ingest 100 client contracts as PDFs, vectorize them, and query them in natural language, all locally.
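The retrieval step behind this kind of local RAG can be sketched in a few lines. This is a toy illustration, not AnythingLLM's implementation: word counts stand in for a real embedding model, and `embed`, `cosine`, `retrieve`, and the sample contracts are hypothetical.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. A real setup uses a local embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, chunks: list[str], k: int = 1) -> list[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

contracts = [
    "Contract A: the termination notice period is 90 days.",
    "Contract B: payment is due within 30 days of invoice.",
    "Contract C: liability is capped at the annual fee.",
]
print(retrieve("What is the termination notice period?", contracts))
```

Only the retrieved chunks are then passed to the local model as context, so neither the documents nor the question leave the machine.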

Real-world use cases in 2025–2026:

- Due diligence: Analyze acquisition reports.

- Customer support: Respond using internal knowledge bases.

- Legal research: Query a contract database without GDPR risk.

- Onboarding: Train employees via questions on internal documentation.

Best setup: Ollama (LLM server) + AnythingLLM (RAG interface) = total security in 5 minutes.

The Trade-Off: Maximum Security, Limited Power

| Criteria | Local (Ollama / AnythingLLM) | Cloud (Claude/GPT/Gemini) |
|---|---|---|
| Data security | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ |
| GDPR compliance | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ |
| Raw power | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Speed | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Operational costs | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ |
| Maintenance | ⭐⭐ | ⭐⭐⭐⭐⭐ |

Verdict: Ollama + AnythingLLM is excellent for ultra-sensitive data and teams with IT resources. For everyone else, it’s a painful trade-off between security and productivity.

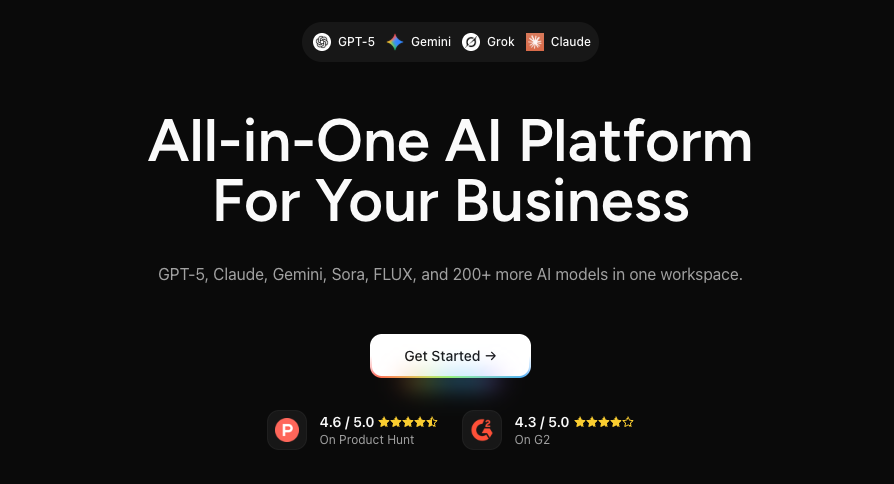

5. Elosia.ai: Multi-Model + Zero Data Retention + Browser-First Synthesis

The Positioning: Reconciling Incompatible Worlds

Elosia.ai emerges in 2026 with a bold promise: access the world’s best models (GPT, Claude, Mistral) in a secure interface, with a technical guarantee that no data is stored by providers.

It’s the “third way” between three dead ends:

- ❌ Cloud giants: Maximum power, vulnerable data.

- ❌ Traditional aggregators: Flexibility, but no ZDR, and data is stored server-side.

- ❌ Local-first: Total security, but limited power and technical complexity.

Elosia combines the best of all three.

Pillar 1: Native Multi-Model Access

Unlike aggregators, Elosia isn’t just an abstraction layer. Its architecture is designed for model diversity from the ground up: GPT-5.2, Claude Opus 4.5, Gemini 3 Pro, Mistral, Llama 3. Each model remains directly accessible, without “signal degradation.” It also maintains transparency about each model’s data policy.

Pillar 2: The ZDR Shield (Zero Data Retention)

Elosia technically guarantees (not just contractually) that no data is stored by model providers.

- Ephemeral processing: Data is held in memory during processing, then erased immediately after response.

- Contractual ZDR agreements with major providers, accessible anytime for transparency.

- Local storage: All saved data is stored in a secure browser compartment.

- No persistent logs: Elosia only archives metadata (timestamps, models used), never prompts or responses.
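The "metadata only" logging policy described in this list can be illustrated with a small gateway object. This is a sketch of the principle, not Elosia's code: `EphemeralGateway` and its fields are hypothetical, and Python itself gives no guarantee that memory is wiped after use.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class EphemeralGateway:
    """Forwards prompts to a model and keeps only metadata, never prompt or response text."""
    audit_log: list[dict] = field(default_factory=list)

    def ask(self, model_name: str, call_model: Callable[[str], str], prompt: str) -> str:
        response = call_model(prompt)  # prompt and response live only in local variables
        # Only the timestamp and the model name are archived.
        self.audit_log.append({"timestamp": time.time(), "model": model_name})
        return response

gateway = EphemeralGateway()
answer = gateway.ask("demo-model", lambda p: p.upper(), "secret contract clause")
print(answer)
print(gateway.audit_log)
```

An auditor can still see when each model was used, but the log contains nothing that could reveal the content of a conversation.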

Practical impact: You can use Claude 4.5 on sensitive client contracts without violating GDPR, because no persistent logs exist at Anthropic.

Pillar 3: Browser-First Innovation (WebGPU)

The third pillar sets Elosia apart: the ability to run models directly in the browser via WebGPU.

- Local execution of small models: Some prompts don’t need Claude. A small model runs in your browser, with zero cloud transmission.

- Intelligent hybridization: All messages are vectorized locally to provide relevant context without sending all information to the LLM.

- Frictionless confidentiality: No need to install Ollama or a GPU. Everything works in the browser, even offline.
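The idea that some prompts never need to reach the cloud can be sketched as a routing rule. The example below is an assumption-laden illustration, not Elosia's actual logic: the keyword list, the word-count threshold, and the `route` function are all hypothetical, and Python is used only to show the decision, whereas a browser implementation would run on WebGPU.

```python
SENSITIVE_MARKERS = ("contract", "salary", "confidential", "client")  # hypothetical keyword list

def route(prompt: str, max_local_words: int = 40) -> str:
    """Return 'local' or 'cloud' for a prompt, using two deliberately simple rules."""
    text = prompt.lower()
    if any(marker in text for marker in SENSITIVE_MARKERS):
        return "local"   # sensitive content stays on the device
    if len(text.split()) <= max_local_words:
        return "local"   # short prompts are assumed to be within a small model's reach
    return "cloud"       # long, non-sensitive prompts go to a larger hosted model

print(route("Summarize this confidential memo"))
print(route("Translate 'hello' into Spanish"))
```

A production router would rely on a proper classifier rather than keywords, but the trade-off is the same: decide on the device first, and send to the cloud only what needs the extra power.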

Cumulative Advantages of Elosia

- Power of cloud giants + contractual ZDR

- Unified interface without cognitive friction

- Security by design

- Native GDPR compliance

- No IT maintenance

- Hybrid local + cloud = optimal performance

6. Conclusion: Which AI Stack Should Your Company Choose in 2026?

| Criteria | Cloud-First | Aggregator | Local-First | Elosia |
|---|---|---|---|---|
| Data security | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Ease of use | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐ | ⭐⭐⭐⭐⭐ |
| Average monthly cost | €20–200 | €10–80 | €0 | €20–60 |
| GDPR compliance | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Average power | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Features | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐ |

Recommendations by Profile

Startup / SME without sensitive data → Pure cloud (GPT-5 + Cursor for code)

- Justification: Low cost, no friction, small team.

- Use case: Marketing agency, creative studio.

Enterprise with sensitive client data → Elosia.ai

- Justification: ZDR + power + native compliance.

- Use case: Law firm, accounting firm, web agency with regulated clients.

Tech group with IT resources → Ollama + AnythingLLM (local) + Claude API (cloud)

- Justification: Maximum security for ultra-sensitive data, cloud for non-confidential tasks.

- Use case: Software publisher, AI agency, strategic consulting.

Decentralized team needing flexibility → Mammoth.ai

- Justification: Best versatility-to-price ratio, no IT complexity.

The 2026 Regulatory Context

- €1.15 billion in GDPR fines in 2025 for compliance violations.

- Up to 7% of turnover possible for AI Act non-compliance (starting 2026 for high-risk systems).

- Strengthened Article 32 GDPR: Enhanced security obligations for AI data.

- A shift from Privacy by Default to Privacy by Design.

The message is clear: The cost of carelessness far exceeds that of a secure stack.

Final Checklist

Before switching solutions, ask yourself these questions:

- Do you have a signed DPA (Data Processing Agreement) with your AI provider?

- Does your data stay within the EU?

- Do you have a contractual or technical ZDR guarantee?

- Has your AI provider committed not to train its models on your data?

- Have you documented a DPIA (Data Protection Impact Assessment) for each AI system?

- Have your employees received training on Shadow AI risks?

- Do you have a Shadow IT policy?

- Does your cyber insurance cover AI breaches?

If three or more answers are “no,” your stack poses a risk.
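The scoring rule is simple enough to automate in an internal audit script. A minimal sketch, assuming each question is recorded as `True` for “yes” and `False` for “no”; the function name `stack_at_risk` and the short labels are illustrative.

```python
def stack_at_risk(answers: dict[str, bool], threshold: int = 3) -> bool:
    """True when at least `threshold` checklist questions were answered 'no' (False)."""
    return sum(1 for yes in answers.values() if not yes) >= threshold

# Hypothetical audit result: True means "yes", False means "no".
audit = {
    "signed_dpa": True,
    "zdr_guarantee": False,
    "dpia_documented": False,
    "employee_training": False,
    "cyber_insurance_covers_ai": True,
}
print(stack_at_risk(audit))
```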